the sensor substrate

The most consequential technology being built right now runs on sensor data. Figure is putting humanoid robots on factory floors. Tesla FSD is processing camera and radar telemetry across millions of miles. World Labs is building spatial intelligence from visual sensors. NVIDIA is running physics simulations that both consume and generate synthetic sensor data at industrial scale. K2 Space operates satellites that live or die by telemetry. Apple is building spatial computing on LiDAR and environmental sensing. Different companies. Different markets. Same substrate. High-fidelity data from physical systems is becoming the foundational layer of modern technology, and it is arriving at a volume and velocity that simply did not exist five years ago.

At Postmates, we created a network that was real-time and distributed, connecting millions of merchants, couriers, and customers. At Serve Robotics, I was building a system where telemetry was critical infrastructure and I watched very strong teams struggle to replay it, reason over it, learn, and train in sim-first environments at scale. Pendulum was a year of field research on neural net-based sensor fusion running on edge devices under electronic warfare conditions, where the signals themselves were contested and the models had to reason through degraded, adversarial inputs in real time. At Anduril, the stakes tightened to the centimeter in environments where a misread signal can cost a mission.

The pattern became obvious. The machines were getting dramatically more capable. The infrastructure to learn from what they generate was not keeping pace. What makes this moment different is that AI can now reason over physical system behavior in real time, on device. Not in a dashboard hours later. Not in a batch job someone runs on Thursday. At the point of operation, while the machine is running. That is a new paradigm. The gap between sensing and understanding starts to collapse. An anomaly that used to require a senior engineer to notice, investigate, and contextualize can now surface as structured evidence the moment it happens. I have seen the failure mode this replaces at every hardware company I have worked at. Physical systems fail because humans cannot process signal fast enough, cannot preserve institutional knowledge long enough, and cannot be everywhere at once across increasingly complex machines.

The senior validation engineer who knows why a specific anomaly matters on a specific vehicle is often the scarcest resource in the building. When that person leaves, the knowledge leaves with them. Real-time AI on sensor data does not replace those people. It multiplies the scarcest expertise in the industry. That is what we are building at Sift: turning raw sensor data into intelligence that physical systems can consume and make decisions against.

Sift closes $42M Series B

Sift is building the sensor intelligence platform for some of the most ambitious machines ever built. AI is moving into the physical world and into the product development process, so the constraint is no longer collecting data; it is turning signals into decisions fast enough to matter. The teams that win will build hardware like software, with tight loops, reproducible outcomes, and compounding learning across the lifecycle.

In the official announcement, Sift shared that it closed a $42M Series B led by StepStone Group, with GV as its largest investor, bringing total funding to $67M. The company describes the problem clearly: software has tracing, logging, and metrics; hardware still too often runs on CSVs, one-off scripts, and institutional memory. That gap between what the machines know and what engineers can actually see is exactly the problem Sift is built to close.

Sift turns telemetry into ground truth for how machines interact with the physical world. What stands out in the announcement is not just the financing, but the specificity of the operating need: high-frequency sensor streams, multimodal telemetry, long-lived historical test data, and an infrastructure layer that makes all of it queryable and usable by both engineers and models. That is the missing layer for mission-critical hardware.

Sensor intelligence has been the consistent throughline in my career, increasing in importance, complexity, and consequence at every step. I watched sensor data quietly become mission-critical infrastructure for autonomous systems. More sensors, higher data rates, more complexity, and a tighter coupling between data and decision making.

What is different now is that this pattern is no longer isolated. It is becoming the default across robotics, autonomy, aerospace, manufacturing, and simulation. Physical AI systems are built on continuous perception and decision loops, and those loops are only as strong as the quality, context, and speed of the underlying sensor intelligence. This is where the leverage is, and it is pushing to the edge.

The bottleneck is converting raw data into intelligence that can be interrogated and acted on by humans and machines.

This is why I joined Sift. More on that soon.

Cause Strife. Chew Glass. Tokenize Chaos.

My late mentor taught me the leverage of applying these concepts across company divisions to ship better products from the inside out. When strong leads embrace this mode of building, it becomes a team sport like the ones I grew up playing. Convert noise into signal to surface tension and catalyze the system to evolve from the top down. Identify what’s not working and fix it. Turn volatility into structure through insight and data. Force the right conversations. It’s not about constant harmony. The process is deliberate and incremental, like building strength through resistance.

Three quarters focused on a 40% efficiency lift and millions saved in data spend. I don’t view alignment as the absence of tension but as the product of processing it as a team.

At Postmates I learned to see chaos as simply unstructured data that is often emotionally charged, to tokenize it, and to combine strongly held opinions with data. Every point of friction is a packet of truth about how a system behaves under stress. Structure it and extract the insights hidden in the noise. Pattern analysis and root cause analysis, pushed upstream, create a measurable system. The faster you identify tension and loop it back into the system, the faster the system evolves. Technical support, design reviews, ops syncs, user research, and strategic planning turn hidden friction into shared understanding. Chaos is telemetry. Big fan of vertical integration and platforms for it. Thanks, James.

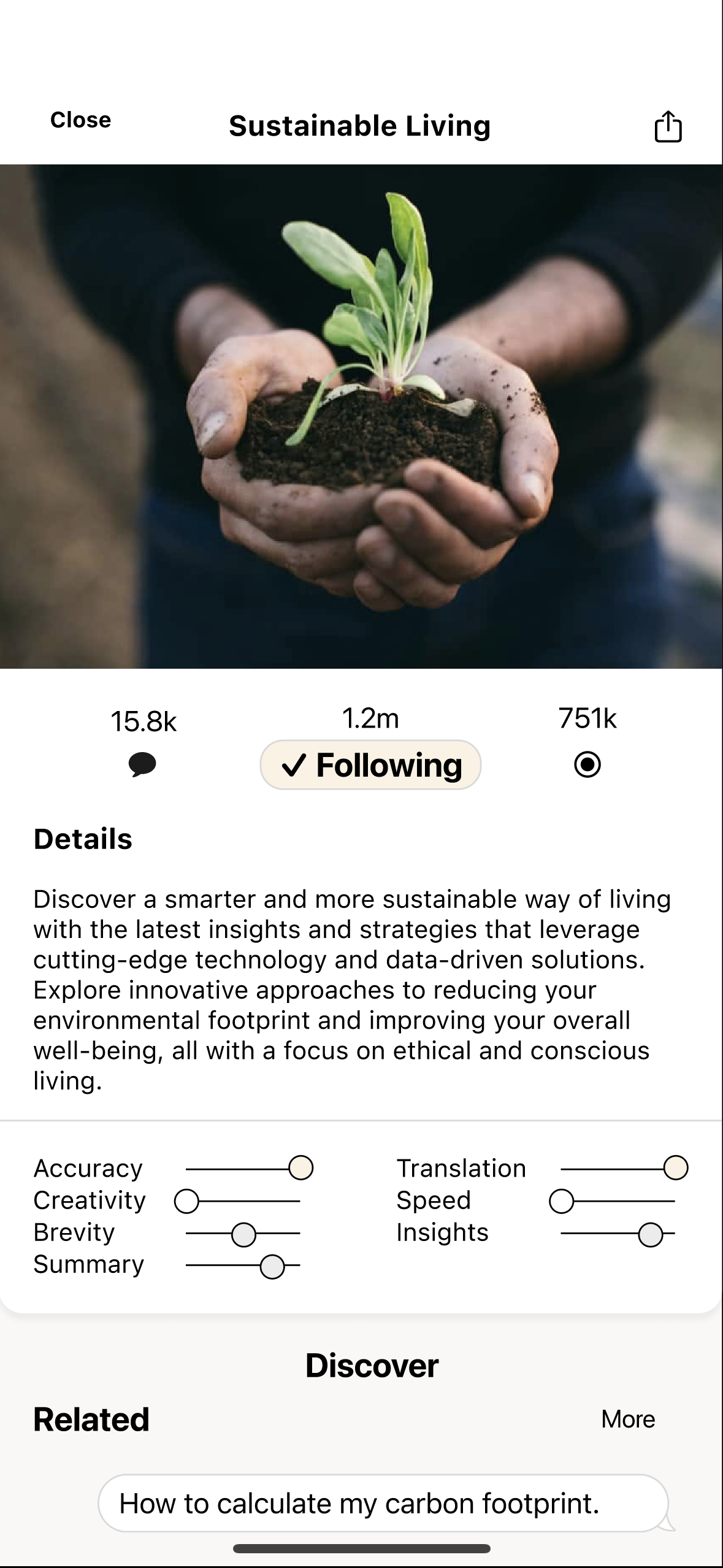

Multimodal AI research 2023

Research project on a personal AI assistant with a constantly evolving prompt library based on engagement across platforms like Reddit. While community members don’t directly interact, they benefit from the incredible new network effects of community without the social. The project runs on iOS and SMS and was built with Xcode, Twilio, Figma, and the GPT-3.5 API.

spacetime

/GET Earth

The next generation of machines is computing across multiple dimensions, processing fused spatial, temporal, and ephemeral data in real time at the edge. These systems sense, localize, navigate, and compute without relying on any single network or signal.

Building geospatial infrastructure connecting space to the edge has demonstrated this across UAVs, UUVs, USVs, and HUDs. The potential for a vertically connected ecosystem linking intelligence from satellites to cloud to edge is clear, no GPS required.